Low Code Service Integration for Your Business

Like large companies, small businesses actively use a variety of information systems to automate and support their processes. So the question of integration, and of a low-code ETL solution for those applications, concerns virtually every enterprise regardless of size. Unlike large businesses, small businesses don't always have in-house developers or the ability to bring in outside experts. As a result, customizing existing solutions and building applications without development work, as expressed in the Low Code/No Code idea, is becoming more and more popular.

The business of the global company Visual Flow, which has developed a service for integrating web applications, is built on these concepts. For example, when a request is added to the CRM system, Visual Flow can add the client's contacts to an email or SMS mailing service and create a task in the task manager, assigning it to the responsible employee. As demand for the product rose, the service's developers faced the problem of scaling. How exactly this was solved is what we will discuss.

What Can Low-Code Do?

The possibilities of low-code tools do not end with batch and streaming data processing without writing Scala code by hand. Using low code in data lake development has become a standard for us. You might say that solutions on the Hadoop stack repeat the development path of classical DWHs built on RDBMSs. Low-code tools on the Hadoop stack can handle both data processing tasks and the building of final BI interfaces. Note that BI here can mean not only the presentation of data but also its editing by business users. We frequently use this functionality to build analytical platforms for the financial sector. Among other things, low code, and Datagram in particular, makes it possible to trace the origin of data flow objects down to individual fields (lineage).

For this purpose, the low-code tool implements integration with Apache Atlas and Cloudera Navigator. The developer registers a set of objects in the Atlas dictionaries and refers to the registered objects when building mappings. This mechanism for tracking data provenance, or analyzing object dependencies, saves a lot of time when calculation algorithms need to be adjusted. For example, it lets you handle legislative changes comfortably when building financial statements. After all, the better we understand the dependencies between report forms at the level of individual objects, the easier such changes are to make.
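The dependency analysis described above can be pictured with a small sketch. It does not use the Apache Atlas or Datagram APIs; the `LINEAGE` dictionary, the field names, and the `downstream_fields` helper are all hypothetical and only illustrate how field-level lineage answers the question "what is affected if this field changes?".

```python
from collections import deque

# Hypothetical field-level lineage: each target field maps to the source
# fields it is calculated from. All names are illustrative assumptions.
LINEAGE = {
    "dm.report_form_1.total_assets": ["dds.balance.amount", "dds.balance.currency"],
    "dm.report_form_2.net_position": ["dm.report_form_1.total_assets", "dds.deals.amount"],
    "dds.balance.amount": ["stg.gl_entries.amount"],
}


def downstream_fields(changed_field, lineage):
    """Return every field that directly or indirectly depends on changed_field."""
    # Invert the mapping: source field -> fields calculated from it.
    dependents = {}
    for target, sources in lineage.items():
        for source in sources:
            dependents.setdefault(source, set()).add(target)

    # Breadth-first walk over the inverted graph.
    affected = set()
    queue = deque([changed_field])
    while queue:
        current = queue.popleft()
        for target in dependents.get(current, ()):
            if target not in affected:
                affected.add(target)
                queue.append(target)
    return affected


if __name__ == "__main__":
    for field in sorted(downstream_fields("stg.gl_entries.amount", LINEAGE)):
        print(field)
```

With lineage recorded per field, a change in one source column yields the list of report fields to re-check, which is the time saving the paragraph refers to.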

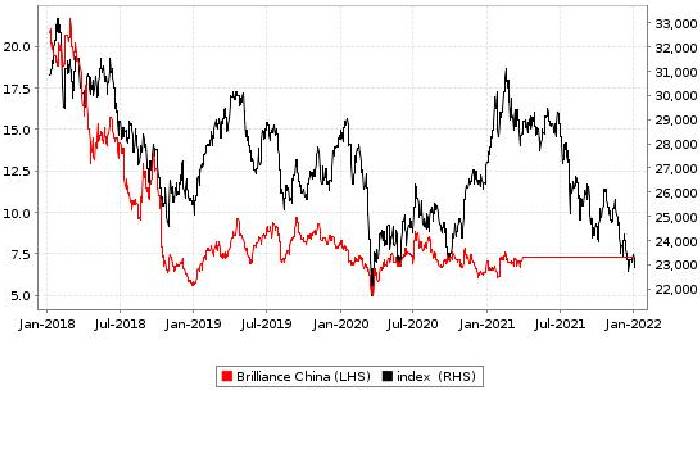

Data Quality & Low-Code

In a project for a research company, the data-checking pipeline was implemented so that it did not affect the performance or speed of the main data calculation flow. The familiar Apache Airflow was used to orchestrate the independent data-verification flows. As each data production step became ready, a separate part of the DQ pipeline was launched in parallel. It is considered good practice to monitor data quality from the moment data first appears in the analytics platform. With metadata available, we can check that the basic conditions (not null, constraints, foreign keys) are met as soon as the data enters the primary layer. This functionality is implemented with automatically generated data quality mappings in Datagram. Code generation in this case is also based on model metadata. In the Mediascope project, the integration was done with Enterprise Architect metadata. Thanks to the pairing of the low-code tool and Enterprise Architect, the following checks were generated automatically:

- Checking for null values in fields with the "not null" modifier;

- Checking for duplicates of the primary key;

- Checking the foreign key of an entity;

- Checking the uniqueness of a row by a set of fields.
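The four checks listed above can be sketched in plain Python. This is not the code Datagram generates; the function names and the sample `orders` and `customers` rows are assumptions made for illustration only.

```python
from collections import Counter


def check_not_null(rows, field):
    """Return rows where a field declared NOT NULL has no value."""
    return [r for r in rows if r.get(field) is None]


def check_duplicate_keys(rows, key_fields):
    """Return key values that occur more than once.

    Works for a primary key as well as for any set of fields
    that is expected to identify a row uniquely.
    """
    counts = Counter(tuple(r.get(f) for f in key_fields) for r in rows)
    return [key for key, n in counts.items() if n > 1]


def check_foreign_key(rows, field, parent_rows, parent_field):
    """Return rows whose foreign key value has no match in the parent entity."""
    parent_keys = {p.get(parent_field) for p in parent_rows}
    return [
        r for r in rows
        if r.get(field) is not None and r.get(field) not in parent_keys
    ]


# Hypothetical sample data.
customers = [{"id": 1}, {"id": 2}]
orders = [
    {"order_id": 1, "customer_id": 1, "order_date": "2024-01-01", "amount": 10.0},
    {"order_id": 2, "customer_id": 3, "order_date": "2024-01-02", "amount": None},
    {"order_id": 2, "customer_id": 2, "order_date": "2024-01-02", "amount": 5.0},
]

if __name__ == "__main__":
    print("null amounts:", len(check_not_null(orders, "amount")))
    print("duplicate primary keys:", check_duplicate_keys(orders, ["order_id"]))
    print("orphaned orders:", len(check_foreign_key(orders, "customer_id", customers, "id")))
    print("duplicate rows by field set:",
          check_duplicate_keys(orders, ["customer_id", "order_date"]))
```

Each function returns the violations rather than a boolean, so a report can show which rows or keys failed.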

Of course, automatic generation of checks has to be introduced gradually. In the project described, this went through the following stages:

- DQ checks embedded in the mappings;

- DQ checks as separate large mappings, each containing the whole set of checks for a single entity;

- Universal parameterized DQ mappings that take metadata and business-check definitions as input.
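The last stage, a single parameterized check that is driven by metadata, might look roughly like the sketch below. The metadata layout (`ORDERS_META`), the `generate_checks` and `render_sql` helpers, and the SQL text are assumptions for illustration; they are not the format used by Datagram or Enterprise Architect.

```python
# Hypothetical entity metadata, as it might be exported from a data model.
ORDERS_META = {
    "name": "orders",
    "primary_key": ["order_id"],
    "not_null": ["order_id", "amount"],
    "foreign_keys": [
        {"field": "customer_id", "parent": "customers", "parent_field": "id"},
    ],
}


def generate_checks(entity_meta, business_rules=()):
    """Build check descriptors from model metadata plus business rules."""
    name = entity_meta["name"]
    checks = []
    for field in entity_meta.get("not_null", []):
        checks.append({"entity": name, "type": "not_null", "field": field})
    if entity_meta.get("primary_key"):
        checks.append({"entity": name, "type": "unique",
                       "fields": list(entity_meta["primary_key"])})
    for fk in entity_meta.get("foreign_keys", []):
        checks.append({"entity": name, "type": "foreign_key", **fk})
    for rule in business_rules:
        # A business rule is assumed to be a SQL predicate that must hold.
        checks.append({"entity": name, "type": "business", "predicate": rule})
    return checks


def render_sql(check):
    """Render one descriptor as a query that counts violating rows."""
    entity = check["entity"]
    if check["type"] == "not_null":
        return f"SELECT COUNT(*) FROM {entity} WHERE {check['field']} IS NULL"
    if check["type"] == "unique":
        cols = ", ".join(check["fields"])
        return (f"SELECT COUNT(*) FROM (SELECT {cols} FROM {entity} "
                f"GROUP BY {cols} HAVING COUNT(*) > 1) d")
    if check["type"] == "foreign_key":
        return (f"SELECT COUNT(*) FROM {entity} c "
                f"LEFT JOIN {check['parent']} p "
                f"ON c.{check['field']} = p.{check['parent_field']} "
                f"WHERE c.{check['field']} IS NOT NULL "
                f"AND p.{check['parent_field']} IS NULL")
    if check["type"] == "business":
        return f"SELECT COUNT(*) FROM {entity} WHERE NOT ({check['predicate']})"
    raise ValueError(f"unknown check type: {check['type']}")


if __name__ == "__main__":
    for check in generate_checks(ORDERS_META, ["amount >= 0"]):
        print(render_sql(check))
```

Because the checks are data rather than hand-written mappings, adding a new check means adding a metadata entry or a rule, not building and deploying a new mapping.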

Perhaps the main benefit of a parameterized checking service is the shorter time it takes to bring functionality to the production environment. New quality checks can bypass the classic pattern of delivering code through the development and testing environments:

- All metadata checks are generated automatically when the model changes in EA.

- Data availability checks (determining whether data is available at a given point in time) can be generated from a reference table that stores the expected arrival time of the next portion of data for each object.

- There is no real risk in delivering check scripts straight to production. Even with a syntax error, the worst that can happen is that a single check fails to run, because the data calculation flow and the quality-check flow are separated.

- The DQ service runs permanently in the production environment and is ready to start as soon as the next data batch arrives.
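Two of the points above, the availability check against a reference of expected arrival times and the isolation of a broken check, can be sketched as follows. The `EXPECTED_ARRIVALS` reference, the 30-minute tolerance, and both function names are hypothetical.

```python
from datetime import datetime, timedelta

# Hypothetical reference: when the next portion of data is expected per object.
EXPECTED_ARRIVALS = {
    "orders": datetime(2024, 1, 2, 6, 0),
    "customers": datetime(2024, 1, 2, 8, 0),
}


def availability_status(obj, arrived_at, now,
                        expected=EXPECTED_ARRIVALS,
                        tolerance=timedelta(minutes=30)):
    """Classify an object's next data portion as available, pending, or late.

    arrived_at is the arrival time of the portion, or None if it has not arrived.
    """
    if arrived_at is not None:
        return "available"
    if now <= expected[obj] + tolerance:
        return "pending"
    return "late"


def run_isolated(checks):
    """Run each named check; a failing check is recorded and the rest still run."""
    results = {}
    for name, check in checks.items():
        try:
            results[name] = check()
        except Exception as exc:  # a broken check must not stop the others
            results[name] = f"not executed: {exc}"
    return results


if __name__ == "__main__":
    now = datetime(2024, 1, 2, 7, 0)
    results = run_isolated({
        "orders_available": lambda: availability_status("orders", None, now),
        "customers_available": lambda: availability_status("customers", None, now),
        "broken_check": lambda: availability_status("unknown_object", None, now),
    })
    for name, status in results.items():
        print(name, "->", status)
```

The broken check in the usage example only produces a "not executed" entry; the other checks, and the data calculation itself, are unaffected.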

Conclusion

The advantage of low code is straightforward. Developers do not have to build an application from scratch, and a programmer freed from additional tasks delivers results more quickly. That speed, in turn, frees up time for optimization work. So in this case you can count on a better and faster solution. Low code is one way to meet your needs if you have tight deadlines, heavy business logic, limited technical expertise, and a need to accelerate time to market.

That said, if you know all the drawbacks of the chosen system and the benefits of using it still clearly outweigh them, move to low code without fear. Moreover, the transition is unavoidable, just as any evolution is. There is no denying the importance of traditional development tools, but in many cases low-code solutions are the best way to improve the efficiency of the tasks at hand.